Introduction

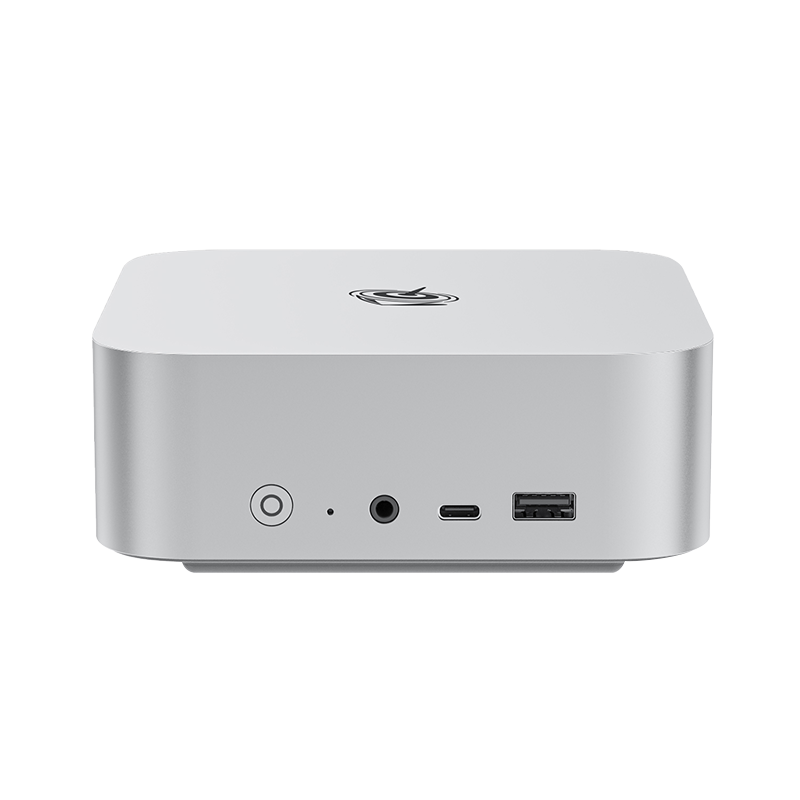

This guide walks you through setting up Open WebUI with the Gemma 4 model on a Beelink mini PC running Windows 11. By the end of this tutorial, you'll have a fully functional local AI chat interface that runs completely offline on your computer once the models are downloaded.

Why This Setup

This setup combines the strengths of Open WebUI with the intelligence of Gemma 4 to deliver a powerful, privacy-focused AI environment. Open WebUI provides a ready-to-use chat interface with Markdown support, code highlighting, and organized chat history, and it serves as a single portal for managing multiple Ollama models. It also supports model customization, Retrieval-Augmented Generation (RAG), local file uploads, and knowledge bases, which makes it suitable for both personal and team use. With multi-user support, role-based access control, and fully offline deployment, teams can collaborate without compromising data privacy.

Gemma 4 complements this well: its reasoning ability, efficiency, and multimodal capabilities let it work with text, code, images, and audio as well as complex tasks, making it a capable model that pairs naturally with Open WebUI for versatile, secure, and high-performance local AI applications.

Step-by-Step Installation Guide

Step 1: Install Ollama

Ollama is the backend service that will run your AI models.

1.1 Download Ollama

- Visit the official Ollama download page: https://ollama.com/download/windows

- Download the OllamaSetup.exe installer

1.2 Install Ollama

- Double-click the downloaded installer and follow the on-screen instructions to complete the installation.

- Ollama runs automatically in the background after installation (you can verify this by checking for the white llama icon in the system tray on the right side of the taskbar).
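If you have Python 3 installed (it is not required for this setup), the short script below is an optional way to confirm that the Ollama service is up. It is a sketch that assumes Ollama is listening on its default address, http://localhost:11434, where a running server replies with the text "Ollama is running"; the function name ollama_is_running is purely illustrative.

```python
import http.client
import urllib.request

# Assumption: Ollama listens on its default local address and port.
OLLAMA_URL = "http://localhost:11434"


def ollama_is_running(base_url: str = OLLAMA_URL, timeout: float = 3.0) -> bool:
    """Return True if an Ollama server answers at base_url with its status text."""
    # Bypass any system-wide proxy: we only ever talk to this machine.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(base_url, timeout=timeout) as resp:
            body = resp.read().decode("utf-8", errors="replace")
            return resp.status == 200 and "Ollama is running" in body
    except (OSError, http.client.HTTPException):
        # Refused connections, timeouts and HTTP errors all mean "not reachable".
        return False


if __name__ == "__main__":
    if ollama_is_running():
        print("Ollama is running.")
    else:
        print("Ollama did not respond on port 11434. Is it started?")
```

Run it from Command Prompt with python followed by the file name you saved it under.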

Step 2: Download and Run the Gemma 4 Model

Before you start, we strongly recommend choosing the Gemma 4 model that matches your Beelink mini PC's hardware for the best performance:

| Processors | Total RAM | Recommended LLM |
| --- | --- | --- |
| AMD Ryzen 7 7735U/7735HS, Intel Core i5-1235U, Intel Core i5-13500H | ≥ 24 GB | Gemma-4-E2B |
| Intel Core Ultra 9 185H/285H, AMD Ryzen 7 8745HS/8845HS, AMD Ryzen 7 H 255, AMD Ryzen 7 260 | ≥ 24 GB | Gemma-4-E4B |
| AMD Ryzen AI Max+ 395 | ≥ 32 GB | Gemma-4-26B-MOE |
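If you set up several mini PCs, the hardware table can also be read as a simple lookup. The Python sketch below is one hypothetical way to encode it: the processor strings are copied from the table, the Ollama tags come from the commands in Step 2.2, and the recommend_tag helper is illustrative rather than part of Ollama or Open WebUI.

```python
from typing import Optional

# (processors listed in the table, minimum total RAM in GB, Ollama model tag)
RECOMMENDATIONS = [
    (
        {"AMD Ryzen 7 7735U", "AMD Ryzen 7 7735HS",
         "Intel Core i5-1235U", "Intel Core i5-13500H"},
        24,
        "gemma4:e2b",
    ),
    (
        {"Intel Core Ultra 9 185H", "Intel Core Ultra 9 285H",
         "AMD Ryzen 7 8745HS", "AMD Ryzen 7 8845HS",
         "AMD Ryzen 7 H 255", "AMD Ryzen 7 260"},
        24,
        "gemma4:e4b",
    ),
    ({"AMD Ryzen AI Max+ 395"}, 32, "gemma4:26b"),
]


def recommend_tag(processor: str, total_ram_gb: int) -> Optional[str]:
    """Return the Ollama tag recommended for this processor and RAM, or None."""
    for processors, min_ram_gb, tag in RECOMMENDATIONS:
        if processor in processors:
            # The table only gives a recommendation when the RAM minimum is met.
            return tag if total_ram_gb >= min_ram_gb else None
    return None  # processor not listed in the table


print(recommend_tag("AMD Ryzen 7 8845HS", 32))  # gemma4:e4b
```

Returning None rather than guessing keeps the sketch faithful to the table: it makes no recommendation for processors or RAM sizes the table does not cover.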

2.1 Open Command Prompt

- Press Win + R on your keyboard, type cmd, and press Enter.

2.2 Download Gemma 4

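- In Command Prompt, enter the command for the model recommended for your hardware in the table above (gemma4:e2b for Gemma-4-E2B, gemma4:e4b for Gemma-4-E4B, or gemma4:26b for Gemma-4-26B-MOE):

ollama run gemma4:e2b

ollama run gemma4:e4b

ollama run gemma4:26b

- Ollama will download the Gemma 4 model to your mini PC (this may take some time). Once complete, you'll see a >>> prompt indicating the model is ready (type /bye to exit the chat).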

- You can now chat with Gemma 4 directly in Command Prompt or in the Ollama app. As a multimodal LLM, Gemma 4 accepts not only text but also PDFs, images, and audio files in your prompts. While the latest Ollama app is already powerful and intuitive, pairing Gemma 4 with Open WebUI unlocks even more functionality.
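Behind the scenes, Open WebUI talks to Ollama through Ollama's local HTTP API rather than through Command Prompt. As an optional illustration, assuming Python 3 is installed and Ollama is on its default port 11434, the sketch below sends one prompt to the /api/chat endpoint with streaming turned off and returns the reply text. The helper names build_chat_request and chat are hypothetical; use whichever model tag you downloaded.

```python
import json
import urllib.request

# Assumption: Ollama's default local API address.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming /api/chat request containing a single user message."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # return one JSON object instead of a stream of chunks
    }
    return urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model: str, prompt: str, timeout: float = 300.0) -> str:
    """Send the prompt to Ollama and return the assistant's reply text."""
    # Skip any system proxy; the request only goes to this machine.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(build_chat_request(model, prompt), timeout=timeout) as resp:
        reply = json.load(resp)
    # A non-streaming reply carries the answer in message.content.
    return reply["message"]["content"]
```

For example, print(chat("gemma4:e2b", "Explain what a mini PC is in one sentence.")) prints the model's answer. The generous timeout leaves room for Ollama to load the model into memory on the first request.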

Step 3: Install Open WebUI using Docker

3.1 Install Docker Desktop

- Download Docker Desktop for Windows from: https://www.docker.com/products/docker-desktop/

- Run the installer and follow the instructions:

  - Accept the license terms.

  - Allow the installer to enable WSL 2 if prompted.

  - Let the installer complete automatically.

- Once the installation is complete, restart your mini PC.

- After the restart, launch Docker Desktop and skip the sign-in when prompted (an account is not required for basic use).

- If you see a “WSL needs updating” message, copy the command wsl --update, paste it into Command Prompt, press Enter, and wait for the update to complete.

- Once the update finishes, Docker Desktop should fully start, with a sidebar showing on the left (as illustrated in the image below).

3.2 Deploy Open WebUI

- Open Command Prompt again

- Copy and paste this command and press Enter:

docker run -d -p 4000:8080 --add-host=host.docker.internal:host-gateway -v open-webui:/app/backend/data --name open-webui --restart always ghcr.io/open-webui/open-webui:main

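The first run downloads the Open WebUI image and then starts it as a container named open-webui; this can take several minutes, so please wait for the process to finish. In the command, -p 4000:8080 makes the interface available on port 4000 of your mini PC, --add-host=host.docker.internal:host-gateway lets the container reach Ollama running on Windows, -v open-webui:/app/backend/data stores your accounts and chats in a Docker volume so they persist if the container is recreated, and --restart always starts the container again whenever Docker Desktop starts.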
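Because the first start can take a while, one optional way to know when the page is ready is to poll it. The Python sketch below (assuming Python 3 is installed, and assuming Open WebUI answers with HTTP 200 once it has started) retries http://localhost:4000 every few seconds until it responds or a time limit passes; wait_for_http and its default limits are illustrative choices, not part of Docker or Open WebUI.

```python
import http.client
import time
import urllib.request


def wait_for_http(url: str = "http://localhost:4000",
                  timeout_s: float = 600.0,
                  interval_s: float = 5.0) -> bool:
    """Poll url until it returns HTTP 200; give up after timeout_s seconds."""
    # Bypass any system proxy, since the target is on this machine.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with opener.open(url, timeout=interval_s) as resp:
                if resp.status == 200:
                    return True
        except (OSError, http.client.HTTPException):
            # Not listening yet, connection reset, or an error page while starting.
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)
```

Calling wait_for_http() blocks for up to ten minutes by default and returns True as soon as the page loads, at which point you can continue with Step 4.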

Step 4: Start Using Gemma 4 in Open WebUI

- Open your web browser (Chrome, Edge, or Firefox), and navigate to: http://localhost:4000

- Create an admin account with your preferred credentials (this account is stored locally and does not require an internet connection).

- After logging in, you'll see a ChatGPT-like interface. Select Gemma 4 from the model dropdown menu and start chatting with your local AI assistant.

- Going further: in the Open WebUI Workspace, you can build custom models, create knowledge bases, and use Retrieval-Augmented Generation (RAG) to process and organize your own data securely.

Conclusion

Congratulations! You now have a powerful local AI setup running on your Beelink mini PC. With Open WebUI and Gemma 4, you have a privacy-focused, offline-capable AI assistant that can handle document processing, coding help, research, and much more.

This setup gives you complete control over your AI environment without relying on cloud services, ensuring your data remains private and secure on your local machine.

Troubleshooting Tips

Common Issues and Solutions:

1. Docker Desktop won't fully start

- Ensure Windows Subsystem for Linux (WSL 2) is enabled and up to date (run wsl --update in Command Prompt).

- Restart your Beelink mini PC after installing Docker Desktop.

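2. Gemma 4 not appearing in Open WebUI

- Verify that Ollama is running in the background (look for the llama icon in the system tray).

- Restart the Open WebUI container, for example by running docker restart open-webui in Command Prompt.

- Check that the Gemma 4 model was downloaded by running ollama list in Command Prompt; the output should include gemma4 with the tag you chose.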
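To check the same list of models that Open WebUI sees, you can also ask Ollama's API directly. This optional Python sketch assumes Ollama's default address and its /api/tags endpoint, which returns a JSON object whose models list contains entries with a name such as gemma4:e2b; the helper names fetch_local_models and gemma4_models are hypothetical.

```python
import json
import urllib.request

# Assumption: Ollama's default address; /api/tags lists locally downloaded models.
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def fetch_local_models(url: str = OLLAMA_TAGS_URL, timeout: float = 5.0) -> list[str]:
    """Return the names of all models Ollama has downloaded."""
    # Bypass any system proxy, since Ollama runs on this machine.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(url, timeout=timeout) as resp:
        data = json.load(resp)
    return [model["name"] for model in data.get("models", [])]


def gemma4_models(names: list[str]) -> list[str]:
    """Keep only Gemma 4 tags such as gemma4:e2b, gemma4:e4b or gemma4:26b."""
    return [name for name in names if name.startswith("gemma4:")]


if __name__ == "__main__":
    try:
        found = gemma4_models(fetch_local_models())
    except OSError as err:
        print(f"Could not reach Ollama: {err}")
    else:
        print(found if found else "No Gemma 4 model found; repeat the ollama run step.")
```

An empty result while Ollama is reachable points to a missing download; an error reaching Ollama points back to the first check in this list.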

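3. Port conflicts

- Ensure port 4000 is not already used by another program.

- If it is, change the port mapping in the Docker command: remove the existing container with docker rm -f open-webui, rerun the command with, for example, -p 3000:8080 instead of -p 4000:8080, and then open http://localhost:3000 instead. Your data stays in the open-webui volume.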
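To check whether a port is free before running the Docker command, one quick heuristic is to try binding a socket to it: if the bind fails, another program is probably using the port. The Python sketch below assumes Python 3 is installed; port_is_free is an illustrative helper, and a port that is free now could still be taken by another program later.

```python
import socket


def port_is_free(port: int, host: str = "0.0.0.0") -> bool:
    """Return True if a TCP socket can be bound to host:port right now."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            # Typically "address already in use" (or a permission error).
            return False
    return True


if __name__ == "__main__":
    for candidate in (4000, 3000):
        status = "free" if port_is_free(candidate) else "in use"
        print(f"Port {candidate}: {status}")
```

Binding to 0.0.0.0 mirrors how Docker publishes ports on all interfaces by default, so a conflict there is the one most likely to stop the container from starting.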